Quick Summary

This blog explains the most widely used deep learning frameworks for building AI solutions. It covers core concepts, framework features, benefits, challenges and practical use cases. The guide also shows how to evaluate frameworks on scalability, performance and development needs, making it easier to select the right framework for your AI project.

Want to build smarter AI applications faster? Deep learning frameworks make that possible by simplifying how complex neural networks are designed, trained and deployed across real world use cases.

Artificial intelligence is transforming industries like healthcare, finance, retail and manufacturing. Deep learning sits at the core of this transformation, powering systems that learn from data and improve continuously.

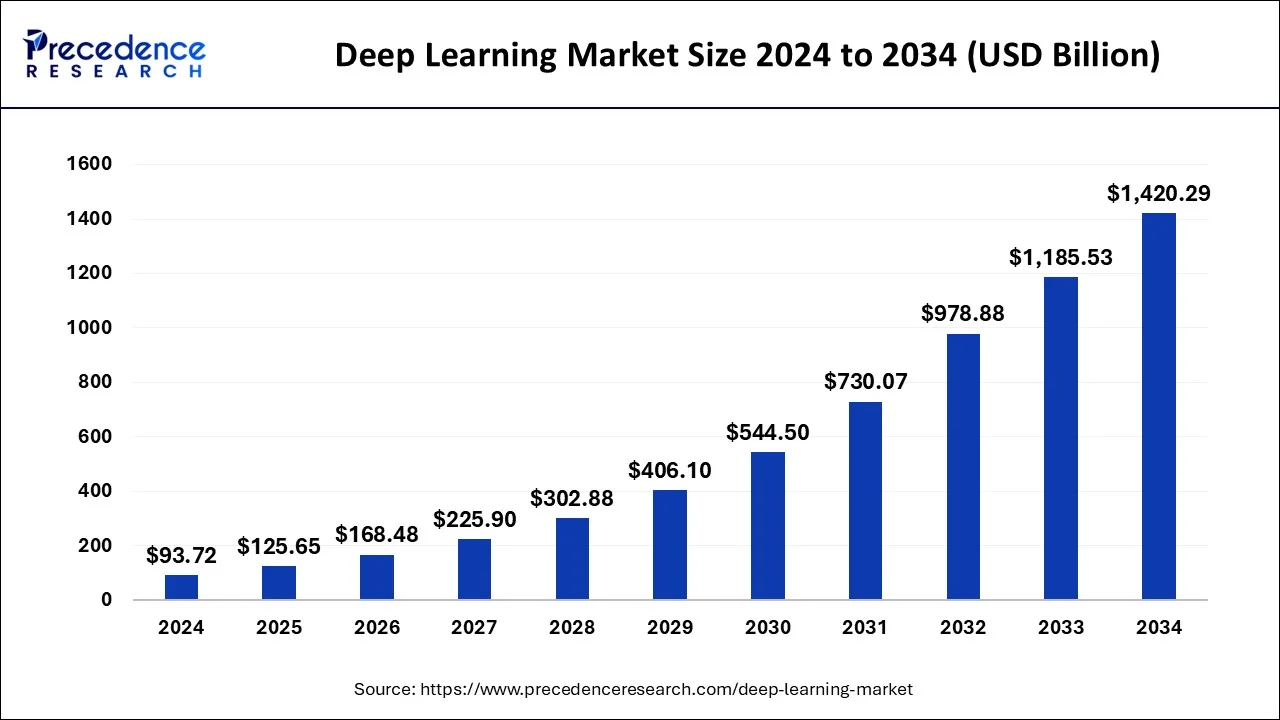

The global deep learning market is expected to reach USD 1420.29 billion by 2034, growing at a CAGR of 31.24% from 2025 to 2034, showing why choosing the right framework matters more than ever.

In this blog, we help you understand deep learning frameworks, compare popular options and choose the best framework based on your project goals and technical requirements.

What are Deep Learning Frameworks?

Deep learning frameworks are software platforms that simplify building, training and deploying neural networks. They provide ready to use libraries, APIs and tools that eliminate the need to code complex algorithms from scratch, helping developers work more efficiently.

Deep learning has brought major progress to AI projects, with over 85% of them reporting better performance, and frameworks play a key role in this success. By handling tasks like model optimization, hardware acceleration and scalability, they enable faster development and more reliable AI applications.

Why Are Deep Learning Frameworks Important?

Deep learning frameworks play a critical role in making AI development faster, more efficient and scalable. They reduce technical complexity and allow developers and businesses to focus on innovation rather than low level implementation details. With growing AI adoption, these frameworks have become essential for successful AI projects.

- Faster Development: Prebuilt libraries and reusable components significantly reduce development time and effort.

- Improved Performance: Optimized computations and hardware acceleration help train models faster and more accurately.

- Scalability: Easily scale models from small experiments to enterprise level AI applications.

- Ease of Use: Abstract complex mathematical operations, making deep learning accessible even to beginners.

- Strong Ecosystem Support: Large communities, documentation and integrations speed up troubleshooting and innovation.

10 Most Important Deep Learning Frameworks You Should Know in 2026

This section highlights the leading deep learning frameworks powering modern AI applications, research innovations and scalable machine learning solutions.

1. TensorFlow

TensorFlow is a powerful open source deep learning framework developed by Google, designed to build, train and deploy machine learning models at scale. It supports everything from experimental research to enterprise grade production systems.

With strong support for distributed computing, hardware acceleration and cross platform deployment, TensorFlow remains a top choice for organizations building robust AI solutions.

Its flexible architecture allows developers to deploy models across cloud environments, mobile devices, browsers and edge hardware, making it highly versatile for real world AI applications.

| Attribute | Details |
| --- | --- |
| Developed by | Google Brain Team |
| Initial Release | November 9, 2015 |
| Stable release | 2.20.0 / August 19, 2025 |
| Written In | Python, C++, CUDA |
| Platform | Linux, macOS, Windows, Android, JavaScript |
| Type | Machine learning library |
| Repository | github.com/tensorflow/tensorflow |
| License | Apache License 2.0 |
| Official Website | tensorflow.org |

Key Features

- Comprehensive ML Ecosystem: Tools for model building, training, validation, optimization and deployment

- Hardware Acceleration: Optimized for GPUs and TPUs for faster training

- Production Ready Deployment: Supports web, mobile, cloud and edge deployments

- Scalable & Distributed Training: Handles large datasets and multi machine workloads

- Strong Community Support: Extensive documentation and ecosystem backed by Google

Best For

- Large scale deep learning projects

- Enterprise grade AI and ML applications

- Production focused machine learning systems

- Teams requiring scalability and cross platform deployment


2. PyTorch

PyTorch is an open source deep learning framework developed by Facebook’s AI Research lab (FAIR), now part of Meta AI. It is widely popular for research and production thanks to its dynamic computation graph, which is built as the code runs and so allows flexibility and faster experimentation.

PyTorch is ideal for developers looking to build neural networks with intuitive, Pythonic code and seamless integration with other Python libraries.

It is heavily used in both academic research and industry projects, supporting applications like computer vision, natural language processing & reinforcement learning.
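To see what a dynamic computation graph means in practice, here is a minimal, framework-free sketch in plain Python. It is not PyTorch code: the `Value` class and the `repeated_product` function are hypothetical names invented for this illustration. The idea it tries to show is define-by-run, where the graph is recorded while ordinary Python executes, so a regular `for` loop can change the shape of the graph on every call.

```python
class Value:
    """A scalar that remembers how it was computed (hypothetical, not a PyTorch class)."""

    def __init__(self, data, parents=(), local_grads=()):
        self.data = data
        self.grad = 0.0
        self._parents = parents          # nodes this value was computed from
        self._local_grads = local_grads  # one chain-rule function per parent

    def __add__(self, other):
        return Value(self.data + other.data, (self, other),
                     (lambda g: g, lambda g: g))

    def __mul__(self, other):
        return Value(self.data * other.data, (self, other),
                     (lambda g: g * other.data, lambda g: g * self.data))

    def backward(self):
        # Order the recorded graph so every node is handled after its consumers.
        order, seen = [], set()

        def visit(node):
            if id(node) not in seen:
                seen.add(id(node))
                for parent in node._parents:
                    visit(parent)
                order.append(node)

        visit(self)
        self.grad = 1.0
        for node in reversed(order):
            for parent, local_grad in zip(node._parents, node._local_grads):
                parent.grad += local_grad(node.grad)


def repeated_product(x, w, steps):
    # Plain Python control flow decides how many multiply nodes get recorded.
    out = x
    for _ in range(steps):
        out = out * w
    return out


x, w = Value(2.0), Value(3.0)
out = repeated_product(x, w, steps=3)   # computes x * w**3
out.backward()
print(out.data, x.grad, w.grad)
```

Calling `repeated_product` with a different `steps` value records a different graph without any separate compile step, which is the property that makes define-by-run frameworks convenient for experimentation.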

| Attribute | Details |
| --- | --- |
| Developed by | Meta AI [Facebook’s AI Research lab – FAIR] |
| Initial Release | September 2016 |
| Stable release | 2.9.1 / November 12, 2025 |
| Written In | Python, C++, CUDA |
| Platform | IA-32, x86-64, ARM64 |
| Type | Deep learning library |
| Repository | github.com/pytorch/pytorch |
| License | BSD-3 |
| Official Website | pytorch.org |

Key Features

- Dynamic Computation Graphs: Flexibility to modify network architecture on the fly

- Pythonic & Intuitive: Easy to learn and integrate with Python tools

- Strong GPU Acceleration: Optimized for CUDA enabled GPUs for faster training

- Extensive Community & Ecosystem: Large number of prebuilt models, tutorials and libraries

- Seamless Deployment: Supports TorchScript and ONNX export, with deployment to cloud services and edge devices

Best For

- Research focused AI projects

- Rapid prototyping and experimentation

- Computer vision, NLP and reinforcement learning applications

- Teams needing flexibility and Python integration

Used By

- Facebook / Meta

- Microsoft

- Tesla

- Airbnb

- Uber

3. Keras

Keras is a high level, user friendly deep learning framework designed for fast experimentation. Originally released as a standalone library that ran on backends such as Theano and, soon after, TensorFlow, it allows developers to build and train neural networks quickly using concise, readable Python code.

Keras is ideal for beginners and researchers who want to prototype models efficiently without worrying about low level implementations.

It supports multiple backend engines and integrates seamlessly with TensorFlow for production ready deployments, making it both accessible and powerful.

| Attribute | Details |
| --- | --- |
| Developed by | François Chollet / ONEIROS |
| Initial Release | March 27, 2015 |
| Stable release | 3.13.0 / December 18, 2025 |
| Written In | Python |
| Platform | Cross platform |
| Type | Frontend for TensorFlow, JAX or PyTorch |
| Repository | github.com/keras-team/keras |
| License | Apache License 2.0 |
| Official Website | keras.io |

Key Features

- User Friendly API: Simple and intuitive interface for building neural networks

- Fast Prototyping: Enables rapid experimentation and model iteration

- Multi Backend Support: Keras 3 runs on TensorFlow, JAX and PyTorch (earlier versions supported Theano and Microsoft CNTK)

- Modular & Extensible: Easily customize layers, optimizers and loss functions

- Seamless Integration: Works with TensorFlow for production deployment

Best For

- Beginners and researchers in deep learning

- Rapid prototyping of AI models

- Small to medium scale AI projects

- Education and learning purposes


4. JAX

JAX is an open source deep learning framework developed by Google, designed for high performance numerical computing. It extends NumPy with automatic differentiation and GPU/TPU acceleration, making it ideal for researchers and engineers building cutting edge AI models.

JAX allows fast experimentation with flexible, functional programming paradigms and is gaining popularity in advanced machine learning research.

Its ability to combine composable transformations with hardware acceleration makes it highly suitable for scientific computing and AI research requiring large scale, differentiable programming.
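The phrase "composable transformations" is easier to grasp with a small example. JAX exposes differentiation as a function that takes a function and returns a new one (`jax.grad`). The sketch below imitates that shape in plain Python so it runs without JAX installed. Everything in it is an assumption made for illustration: the `Dual` class and this toy `grad` are hypothetical, and they use forward-mode dual numbers, a simpler technique than the reverse-mode differentiation that `jax.grad` performs. It is also not robust beyond simple cases like the one shown.

```python
class Dual:
    """A value paired with its derivative (hypothetical helper, not a JAX type)."""

    def __init__(self, primal, tangent):
        self.primal = primal
        self.tangent = tangent

    def __add__(self, other):
        other = _lift(other)
        return Dual(self.primal + other.primal, self.tangent + other.tangent)

    __radd__ = __add__

    def __mul__(self, other):
        other = _lift(other)
        # Product rule: (uv)' = u v' + u' v
        return Dual(self.primal * other.primal,
                    self.primal * other.tangent + self.tangent * other.primal)

    __rmul__ = __mul__


def _lift(value):
    # Treat plain numbers as constants with zero derivative.
    return value if isinstance(value, Dual) else Dual(value, 0.0)


def grad(f):
    """Toy transformation: turn f into a function that returns df/dx."""
    def df(x):
        return _lift(f(Dual(x, 1.0))).tangent
    return df


def cube(x):
    return x * x * x


first = grad(cube)          # behaves like 3 * x**2
second = grad(grad(cube))   # transformations compose: behaves like 6 * x
print(first(3.0), second(3.0))
```

Because `grad` returns an ordinary function, it can be applied again or combined with other wrappers, which is the sense in which such transformations are composable.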

| Attribute | Details |
| --- | --- |
| Developed by | Google |
| Initial Release | December 2018 |
| Stable release | v0.8.2 / December 18, 2025 |
| Written In | Python, C++ |
| Platform | Linux, macOS, Windows |
| Type | Numerical computing & deep learning library |
| Repository | github.com/jax-ml/jax |
| License | Apache License 2.0 |
| Official Website | docs.jax.dev/en/latest |

Key Features

- Automatic Differentiation: Simplifies gradient computation for complex models

- Hardware Acceleration: Optimized for GPUs and TPUs for high speed training

- Functional Programming: Supports composable and modular model definitions

- Integration with NumPy: Familiar syntax for scientific computing users

- Highly Flexible: Ideal for research and experimental AI model development

Best For

- Advanced AI research and experimentation

- High performance scientific computing

- Gradient based optimization problems

- Developers seeking flexible, composable deep learning models

Used By

- Google Research

- DeepMind

- OpenAI (experimental projects)

- NVIDIA

- University AI labs

5. Apache MXNet

Apache MXNet is a flexible and efficient open source deep learning framework developed under the Apache Software Foundation. It supports both symbolic and imperative programming, enabling developers to prototype quickly while maintaining high performance for production.

MXNet is highly scalable, capable of distributed training across multiple GPUs and machines, making it suitable for large scale AI deployments.

MXNet is particularly popular in cloud based AI solutions due to its close integration with AWS services.

| Attribute | Details |
| --- | --- |
| Developed by | Apache Software Foundation |
| Initial Release | November 2015 |
| Stable release | 1.9.1 / May 10, 2022 |
| Written In | C++, Python, R, Java, Julia, JavaScript, Scala, Go, Perl |
| Platform | Linux, macOS, Windows |
| Type | Library for machine learning and deep learning |
| Repository | github.com/apache/mxnet |
| License | Apache License 2.0 |
| Official Website | mxnet.apache.org |

Key Features

- Hybrid Programming: Supports both symbolic and imperative execution

- Scalability: Efficiently trains models on multiple GPUs and distributed systems

- Flexible APIs: Supports Python, R, Scala, Julia and JavaScript

- Optimized for Cloud: Deep integration with AWS ecosystem for deployment

- Pretrained Models & Gluon API: Simplifies model development and experimentation

Best For

- Cloud based AI applications

- Large scale distributed training

- Developers needing flexibility and performance

- AI projects integrated with AWS infrastructure

Used By

- Amazon

- Microsoft

- Baidu

- Salesforce

- Wolfram Research

6. Caffe

Caffe is a fast, lightweight deep learning framework focused on speed and modularity, especially for computer vision tasks. Developed by the Berkeley AI Research lab, it is known for its efficient model execution and suitability for production environments where performance matters.

Caffe uses a configuration based approach, allowing developers to define models without extensive coding, which makes experimentation & deployment faster.

| Attribute | Details |
| --- | --- |
| Developed by | Berkeley Vision and Learning Center |
| Initial Release | December 2013 |
| Stable release | 1.0 / April 18, 2017 |
| Written In | C++ |
| Platform | Linux, macOS, Windows |
| Type | Deep learning library |
| Repository | github.com/BVLC/caffe |
| License | BSD |
| Official Website | caffe.berkeleyvision.org |

Key Features

- High Performance: Optimized for speed with GPU and CPU support

- Model Configuration Files: Define networks using simple prototxt files

- Strong Computer Vision Support: Widely used for image classification and recognition

- Pretrained Models: Large model zoo for quick implementation

- Production Friendly: Suitable for deployment focused workflows

Best For

- Computer vision and image processing tasks

- High performance inference systems

- Production level deep learning pipelines

- Projects needing fast model execution


7. Deeplearning4j (DL4J)

Deeplearning4j is an enterprise grade, open source deep learning framework built specifically for the Java Virtual Machine (JVM). It is designed to integrate seamlessly with existing Java and Scala based systems, making it a strong choice for large scale, production focused applications.

DL4J supports distributed training and is optimized for business use cases that require scalability, security and performance in enterprise environments.

| Attribute | Details |
| --- | --- |
| Developed by | Konduit K.K. and contributors |
| Initial Release | February 2014 |
| Stable release | 1.0.0-M2.1 / August 17, 2022 |
| Written In | Java, CUDA, C, C++ |
| Platform | CUDA, x86, ARM, PowerPC |
| Type | Natural language processing, deep learning, machine vision, artificial intelligence |
| Repository | github.com/deeplearning4j/deeplearning4j |
| License | Apache License 2.0 |
| Official Website | deeplearning4j.konduit.ai |

Key Features

- JVM Native Framework: Built for Java and Scala ecosystems

- Distributed Training: Supports Apache Spark and Hadoop

- Enterprise Ready: Designed for large scale, production deployments

- GPU Acceleration: CUDA support for faster model training

- Integration Friendly: Works well with existing enterprise tools

Best For

- Enterprise AI and production systems

- Java and Scala based applications

- Distributed and big data environments

- Scalable deep learning solutions


8. Hugging Face Transformers

Hugging Face Transformers is a popular open source library focused on natural language processing (NLP). It provides pre-trained transformer models that enable developers to build, fine tune and deploy state of the art AI applications faster with minimal effort.

The framework simplifies working with large language models, and supports multiple deep learning backends, making it widely adopted for modern AI use cases.

| Attribute | Details |
| --- | --- |
| Developed by | Hugging Face |
| Initial Release | November 2018 |
| Stable release | v5.0.0 / December 1, 2025 |
| Written In | Python |
| Platform | Windows, Linux, macOS |
| Type | Open source Python library |
| Repository | github.com/huggingface/transformers |
| License | Apache License 2.0 |
| Official Website | huggingface.co/docs/transformers |

Key Features

- Pre-trained Transformer Models: Access to BERT, GPT, T5, RoBERTa and more

- Multi Framework Support: Works with PyTorch, TensorFlow and JAX

- Easy Fine Tuning: Simple APIs for training custom models

- Strong NLP Focus: Optimized for text, language and conversational AI

- Active Community: Rapid updates and strong ecosystem support

Best For

- Natural language processing tasks

- Large language model development

- Chatbots and conversational AI

- Text classification, translation and summarization

Used By

- Amazon

- Meta

- Microsoft

- Intel

9. Microsoft Cognitive Toolkit (CNTK)

Microsoft Cognitive Toolkit is a deep learning framework developed by Microsoft for building high performance neural networks. It is known for its speed, scalability and efficient handling of large datasets, making it suitable for enterprise grade AI applications.

CNTK is particularly strong in speech recognition, computer vision & natural language processing, with optimized performance on distributed and GPU based systems.

| Attribute | Details |
| --- | --- |
| Developed by | Microsoft Research |
| Initial Release | January 25, 2016 |
| Stable release | 2.7.0 / April 26, 2019 |
| Written In | C++ |
| Platform | Windows, Linux |
| Type | Library for machine learning and deep learning |
| Repository | github.com/microsoft/CNTK |
| License | MIT License |
| Official Website | https://www.microsoft.com/en-us/research/publication/introduction-microsoft-cntk-v2-0-library-2/ |

Key Features

- High Performance: Optimized computation with efficient resource utilization

- Distributed Training: Scales across multiple GPUs and machines

- Flexible Model Design: Supports feedforward, CNNs and RNNs

- Enterprise Focus: Designed for production level AI workloads

- Cross Platform Support: Works across major operating systems

Best For

- Enterprise scale AI applications

- Speech and language processing systems

- High performance model training

- Distributed deep learning workloads

Used By

- Microsoft

- Bing

- Skype

- Azure AI services

- Delta Air Lines

10. Theano

Theano is one of the earliest deep learning frameworks that played a foundational role in the development of modern AI libraries. It allows developers to define, optimize and evaluate mathematical expressions involving multi dimensional arrays efficiently.

Although no longer actively maintained, Theano remains influential, as many popular frameworks like TensorFlow and PyTorch were inspired by its design principles.
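The define, optimize and evaluate workflow described above can be pictured with a tiny expression graph in plain Python. This is only a sketch of the general symbolic approach, not Theano's API: the `Var`, `Const`, `Add` and `Mul` classes and the `optimize` and `evaluate` functions are hypothetical names made up for this example, and the only optimizations shown are constant folding and removal of no-op terms.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Var:
    name: str


@dataclass(frozen=True)
class Const:
    value: float


@dataclass(frozen=True)
class Add:
    left: object
    right: object


@dataclass(frozen=True)
class Mul:
    left: object
    right: object


def optimize(expr):
    """Rewrite the graph before any numbers flow through it."""
    if not isinstance(expr, (Add, Mul)):
        return expr
    left, right = optimize(expr.left), optimize(expr.right)
    if isinstance(left, Const) and isinstance(right, Const):
        # Constant folding: compute the result once, ahead of time.
        if isinstance(expr, Add):
            return Const(left.value + right.value)
        return Const(left.value * right.value)
    # Drop no-op terms: x * 1 and x + 0 both simplify to x.
    identity = Const(1.0) if isinstance(expr, Mul) else Const(0.0)
    if left == identity:
        return right
    if right == identity:
        return left
    return type(expr)(left, right)


def evaluate(expr, env):
    """Run the graph with concrete values for each variable."""
    if isinstance(expr, Const):
        return expr.value
    if isinstance(expr, Var):
        return env[expr.name]
    left, right = evaluate(expr.left, env), evaluate(expr.right, env)
    return left + right if isinstance(expr, Add) else left * right


# Step 1: define x * 1 + 2 * 3 symbolically. Nothing is computed yet.
expression = Add(Mul(Var("x"), Const(1.0)), Mul(Const(2.0), Const(3.0)))
# Step 2: optimize the graph down to x + 6.
fast_expression = optimize(expression)
# Step 3: evaluate with real data.
print(evaluate(fast_expression, {"x": 4.0}))
```

Separating the definition from the evaluation is what gives a symbolic framework room to rewrite the whole graph before running it, at the cost of being less direct to debug than define-by-run code.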

| Attribute | Details |
| --- | --- |
| Developed by | MILA, Université de Montréal (original authors); PyMC Development Team |
| Initial Release | February 2007 |
| Stable release | 1.0.5 / July 27, 2020 |
| Written In | Python, CUDA |
| Platform | Windows, Linux, macOS |
| Type | Machine learning library |
| Repository | github.com/Theano/Theano |
| License | The 3-Clause BSD License |
| Official Website | https://pypi.org/project/Theano/ |

Key Features

- Symbolic Computation: Defines mathematical expressions symbolically

- Automatic Differentiation: Efficient gradient calculations for deep learning

- GPU Acceleration: Supports CUDA for faster numerical computation

- Optimization Engine: Improves execution speed automatically

- Foundational Framework: Influenced modern deep learning libraries

Best For

- Academic research and learning

- Understanding deep learning fundamentals

- Mathematical and numerical computation

- Legacy deep learning projects

Used By

- OpenAI

- JPMorgan Chase

Quick Comparison of Top Deep Learning Frameworks

The table below compares the most important deep learning frameworks based on popularity, best use cases and learning difficulty, helping you choose the right tool faster.

| No. | Framework | GitHub Stars* | Best Use Case | Difficulty |
| --- | --- | --- | --- | --- |
| 1 | TensorFlow | 193k ⭐ | Production ready AI, large scale systems | Medium |
| 2 | PyTorch | 96.1k ⭐ | Research, experimentation, dynamic models | Easy–Medium |
| 3 | Keras | 63.7k ⭐ | Rapid prototyping, beginner friendly projects | Easy |
| 4 | JAX | 34.4k ⭐ | High performance research, numerical computing | Hard |
| 5 | Apache MXNet | 20.8k ⭐ | Scalable and distributed deep learning | Medium |
| 6 | Caffe | 34.8k ⭐ | Computer vision and image processing | Medium |
| 7 | Deeplearning4j | 14.2k ⭐ | Enterprise Java based AI applications | Hard |
| 8 | Hugging Face Transformers | 154k ⭐ | NLP, large language models, text based AI | Easy–Medium |
| 9 | Microsoft CNTK | 17.6k ⭐ | High performance enterprise AI solutions | Hard |
| 10 | Theano | 10k ⭐ | Academic research and learning fundamentals | Hard |

*GitHub stars are approximate and may change over time.

How to Choose the Right Deep Learning Framework for Your Project

Choosing the right deep learning framework depends on your project goals, team expertise, scalability needs and deployment requirements. No single framework fits every use case, so it’s important to evaluate a few key factors before deciding.

1. Define Your Project Requirements

Start by identifying what you want to build, such as computer vision, NLP, recommendation systems or research prototypes. Some frameworks excel in specific domains, such as Hugging Face for NLP or Caffe for image processing.

2. Consider Your Team’s Skill Level

If your team is new to deep learning, beginner friendly frameworks like Keras or PyTorch can reduce the learning curve. Advanced teams may prefer TensorFlow or JAX for greater control and performance.

3. Evaluate Scalability and Performance

For large scale or production ready applications, choose frameworks that support distributed training and efficient deployment, such as TensorFlow, Apache MXNet or Deeplearning4j.

4. Check Ecosystem and Community Support

A strong ecosystem means better documentation, frequent updates and community help. Frameworks like TensorFlow, PyTorch and Hugging Face benefit from active developer communities and long term support.

5. Deployment and Platform Compatibility

Ensure the framework supports your target platforms like cloud, mobile, web or edge devices. TensorFlow stands out for multi platform deployment, while PyTorch is preferred for rapid experimentation.
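If you prefer to narrow the options programmatically, the first two factors (project type and team skill level) can be turned into a simple filter. The sketch below is one possible approach rather than a recommended tool: the `shortlist` function and the numeric `DIFFICULTY_RANK` ordering are assumptions made for this example, while the rows themselves are copied from the comparison table earlier in this blog.

```python
# Rows copied from the comparison table: (framework, best use case, difficulty).
FRAMEWORKS = [
    ("TensorFlow", "Production ready AI, large scale systems", "Medium"),
    ("PyTorch", "Research, experimentation, dynamic models", "Easy-Medium"),
    ("Keras", "Rapid prototyping, beginner friendly projects", "Easy"),
    ("JAX", "High performance research, numerical computing", "Hard"),
    ("Apache MXNet", "Scalable and distributed deep learning", "Medium"),
    ("Caffe", "Computer vision and image processing", "Medium"),
    ("Deeplearning4j", "Enterprise Java based AI applications", "Hard"),
    ("Hugging Face Transformers", "NLP, large language models, text based AI", "Easy-Medium"),
    ("Microsoft CNTK", "High performance enterprise AI solutions", "Hard"),
    ("Theano", "Academic research and learning fundamentals", "Hard"),
]

# Assumed ordering of the table's difficulty labels, easiest first.
DIFFICULTY_RANK = {"Easy": 1, "Easy-Medium": 2, "Medium": 3, "Hard": 4}


def shortlist(keyword, max_difficulty):
    """Return frameworks whose use case mentions `keyword` and whose
    difficulty is no higher than `max_difficulty`."""
    limit = DIFFICULTY_RANK[max_difficulty]
    return [
        name
        for name, use_case, difficulty in FRAMEWORKS
        if keyword.lower() in use_case.lower()
        and DIFFICULTY_RANK[difficulty] <= limit
    ]


print(shortlist("research", "Medium"))
print(shortlist("enterprise", "Hard"))
```

A keyword match is a blunt instrument, so treat the result as a starting shortlist and then weigh scalability, ecosystem and deployment targets by hand.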

Frequently Asked Questions

What is a deep learning framework?

A deep learning framework is a software library that simplifies building, training and deploying neural networks for AI applications.

Which deep learning framework is best for beginners?

Keras and PyTorch are best for beginners due to their simple APIs, flexibility and strong community support.

Which deep learning frameworks are the most popular?

TensorFlow and PyTorch are the most popular frameworks, widely used in both research and production environments.

Which framework is best for NLP tasks?

Hugging Face Transformers is ideal for NLP tasks, offering pre-trained models for text, translation and conversational AI.

Can I use more than one framework in the same project?

Yes, many projects combine frameworks, such as using Hugging Face with PyTorch or TensorFlow for training and deployment.

Are deep learning frameworks free to use?

Most deep learning frameworks are open source and free, though infrastructure and cloud resources may add costs.