Node.js is a popular asynchronous, event-driven JavaScript runtime environment that is used mainly to build back-end and front-end frameworks. It has received over 8.3K votes on StackShare and has steadily gained popularity since its introduction in 2009. In addition, 85% of respondents recommend using Node.js to build web applications.

Ryan Dahl, its creator, designed Node.js to make it easier to build scalable network applications. Because Node.js uses no locks, its users do not have to worry about deadlocking the process. Almost no function in Node.js performs I/O directly, so the process does not block.

Node.js offers functionality that keeps the development process moving smoothly and helps deliver reliable end solutions. Nowadays there is a large pool of Indian developers for hire who are Node.js experts and can help you build the best solution for your requirements.

Keep in mind that the code must be maintainable and of high quality. There are several ways to achieve that, and one of the best is test-driven development (TDD) with Node.js!

Building APIs for Node.js and Express using TDD

Node.js and its framework Express are in demand largely because of REST API development. Together they can build a RESTful web application that serves both an API and HTML content.

A key step in building an Express project is testing the APIs you create and making sure the output meets your expectations.

Initial Steps-

Start by installing Node.js and the Git version control system, unless you already have them. The Express application created in this article provides an API for addition and subtraction. Run the commands below in a terminal window to download the example application-

git clone https://github.com/cianclarke/tdd-for-apis.git ; cd tdd-for-apis # Clone the finished repository

git checkout boilerplate # jump to the initial project code

Next, install the testing frameworks as dev-dependencies. This means they are not installed when npm install runs in production mode (for example, npm install --production); they are only needed during development. Run the commands below to set up the testing frameworks.

npm install --save-dev supertest

npm install --save-dev mocha

With the testing framework set up, it is time to create the first test. It will be an integration test for the “addition” route. Each Express.js “route” defines an application endpoint (URI) and how it responds to client requests.

Creating Integration tests-

Integration tests exercise the application as a whole and verify the API footprint it exposes. Integration testing is a type of testing in which software modules are combined and tested as a group. In Node.js test-driven development, it serves to expose defects in the interactions between software modules when they are integrated.

In this application, integration tests verify the functionality implemented by the routes: the API should return the appropriate status codes and bodies for every scenario that may arise.

First, create a directory to store the tests in, along with a blank file that will contain the first test. Make sure you are in the project root directory, then run the commands below.

mkdir -p test/integration

touch test/integration/test-route-addition.js

The new file name carries two prefixes: test marks it as a test, and route marks that it tests a route.

Now write the integration test that defines the behaviour of the API endpoint. The route is mounted at /add and takes two query string parameters named a and b. If both parameters are integers, the API responds with a 200 (OK) status code. If either value is missing or is not an integer, the API responds with a 422 (Unprocessable Entity) status code.

Your test will look something like this-

    var supertest = require('supertest'),
        app = require('../../app');

    // Integer parameters should be accepted with a 200 (OK).
    exports.addition_should_accept_numbers = function(done){
      supertest(app)
        .get('/add?a=1&b=1')
        .expect(200)
        .end(done);
    };

    // Non-integer parameters should be rejected with a 422.
    exports.addition_should_reject_strings = function(done){
      supertest(app)
        .get('/add?a=string&b=2')
        .expect(422)
        .end(done);
    };

Next, add a test to verify that the subtraction route serves up its API as expected. It lives in its own file so that it stays independent of the addition tests; a test runner that executes files in parallel can then save time on the full suite (Mocha itself runs test files one after another by default). Run the command below to create a new file for the ‘subtraction’ test case-

touch test/integration/test-route-subtraction.js

This test verifies that the response body carries a particular property. That check is needed to confirm the API works as intended, because a 200 (OK) status code alone does not prove the API did its job. The new test will look something like this-

    var supertest = require('supertest'),
        assert = require('assert'),
        app = require('../../app');

    // A 200 alone is not enough: the body must carry a numeric result.
    exports.subtraction_should_respond_with_a_numeric_result = function(done){
      supertest(app)
        .get('/subtract?a=5&b=4')
        .expect(200)
        .end(function(err, response){
          assert.ok(!err);
          assert.ok(typeof response.body.result === 'number');
          return done();
        });
    };

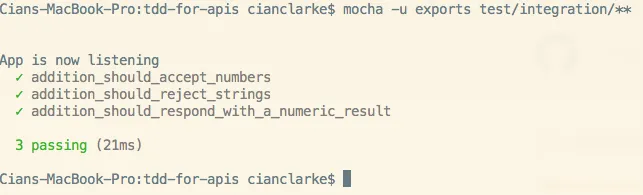

Running Integration tests

Stubs for the /add and /subtract routes have already been created and mounted in the app.js file. The files that define the routes are in the routes directory, and also in the demo repository on GitHub. Keep in mind that for now the routes only return the 200 (OK) status code.

These stubs let the test runner fail gracefully instead of crashing, which gives us a starting point for implementing the functionality: we start with a failing test and then move on to the implementation.

To run the tests, use the command below-

node_modules/.bin/mocha -u exports test/integration/*

You can see all the changes for this step, including what npm does when we run `install --save-dev`, on GitHub.

Make the integration tests pass

It is now time to make the tests pass. To do that, implement the /add and /subtract routes that were created earlier. Keep in mind that they accept only numbers and give back a numeric result. Separation of concerns is good programming practice, so we will first create empty libraries.

These libraries are responsible for the mathematical operations. Create a lib directory containing two files, add.js and subtract.js. Each exports a function that simply returns 0 for now, since no test checks the accuracy of the result yet.

Now run the commands below.

mkdir lib

echo "module.exports = function(){ return 0; };" > lib/add.js

echo "module.exports = function(){ return 0; };" > lib/subtract.js

Next, set up the route handlers to make use of the add and subtract functions. All that is required is to update the route in routes/add.js so that it looks something like this.

    var add = require('../lib/add');

    // a and b are attached to req by the validation middleware in app.js.
    module.exports = function(req, res, next){
      return res.json({ result : add(req.a, req.b) });
    };

Before proceeding, make sure you update routes/subtract.js in the same way.

As mentioned earlier, these functions should accept only numbers. That validation logic should not be repeated in both route handlers, so add the input checks as middleware that runs before the route handlers. Here it is added directly to app.js; in a larger application it would live in a separate middleware directory alongside lib and routes.

Update app.js and add the new middleware as shown below.

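    // Validate the query parameters once, before any route handler runs.
    app.use(function(req, res, next){
      var a = parseInt(req.query.a, 10),
          b = parseInt(req.query.b, 10);

      // isNaN alone covers missing and non-numeric values; a falsy check
      // such as !a would also reject the valid input 0.
      if (isNaN(a) || isNaN(b)){
        return res.status(422).end("You must specify two numbers as query params, A and B");
      }

      req.a = a;
      req.b = b;
      return next();
    });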
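One subtlety of the middleware above is how query strings become numbers: parseInt accepts a leading numeric prefix, so a value such as 12abc parses as 12. The sketch below shows one stricter alternative. The helper name parseIntegerParam is hypothetical and is not part of the demo repository.

```javascript
// Hypothetical helper (not in the demo repository): parse a query-string
// value as a base-10 integer, returning null for anything else.
function parseIntegerParam(value) {
  // Only an optional minus sign followed by digits is accepted.
  if (typeof value !== 'string' || !/^-?\d+$/.test(value)) {
    return null;
  }
  return parseInt(value, 10);
}

console.log(parseIntegerParam('0'));       // 0 (zero is a valid input)
console.log(parseIntegerParam('-4'));      // -4
console.log(parseIntegerParam('string'));  // null
console.log(parseIntegerParam('12abc'));   // null, whereas parseInt('12abc', 10) is 12
console.log(parseIntegerParam(undefined)); // null (missing parameter)
```

Whether a value like 12abc should be rejected is a design choice; the stricter check simply makes the 422 response apply to anything that is not a plain integer.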

Run the integration tests again, and this time they should pass. Your output should look like the following-

To see more of this code, click here- https://rb.gy/si7rco

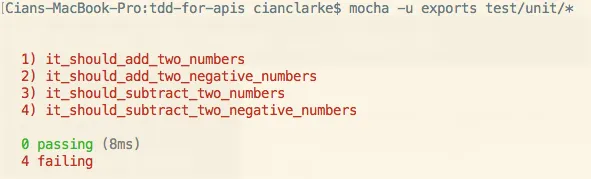

Adding the Unit Tests

With the integration tests for the API in place, we can add unit tests! These verify the addition and subtraction logic, and at first they will fail. That is expected: in TDD you write a failing test, implement the code, and then watch the test pass.

Let’s start by creating a directory for the tests to live in, and then create blank files for the addition and subtraction tests. Run the commands below to create the new test files.

mkdir test/unit

touch test/unit/test-lib-addition.js ; touch test/unit/test-lib-subtraction.js;

Update test-lib-addition.js so that your test looks something like this.

    var assert = require('assert'),
        add = require('../../lib/add');

    exports.it_should_add_two_numbers = function(done){
      var result = add(1,1);
      assert.strictEqual(result, 2);
      return done();
    };

    exports.it_should_add_two_negative_numbers = function(done){
      var result = add(-2,-2);
      assert.strictEqual(result, -4);
      return done();
    };

When you run the unit tests, they fail as expected because the library still returns zero. That failure tells you that you are on the right path!

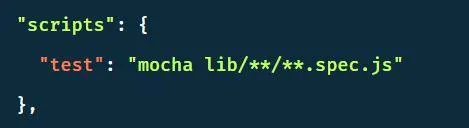

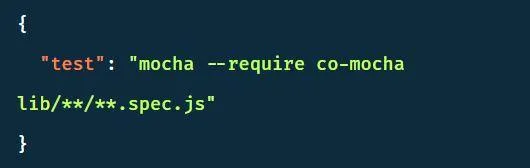

Telling users to run tests

Node.js applications follow a strong convention of running tests with npm test. Conventions like this make a project straightforward to understand. In this step, add the following entry to the package.json file-

"scripts" : {

"test" : "node_modules/.bin/mocha -u exports test/**/*"

}

You can now run the tests with npm test instead of typing the long command. The tests are selected by the test/**/* pattern, which means every file within a subdirectory of test runs as a Mocha test. Run npm test, and the output displays the results of running seven tests!

Test-Driven development approach using Node.js

The steps below show how the JavaScript TDD approach works in Node.js: write a failing test, write code to satisfy the test, and then refactor. The steps also cover writing a new module and introduce the testing tools and principles involved.

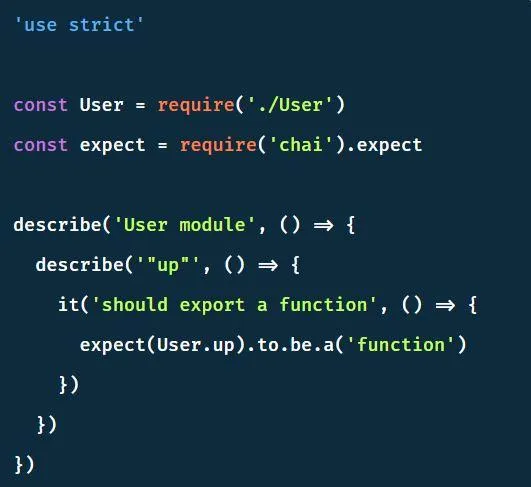

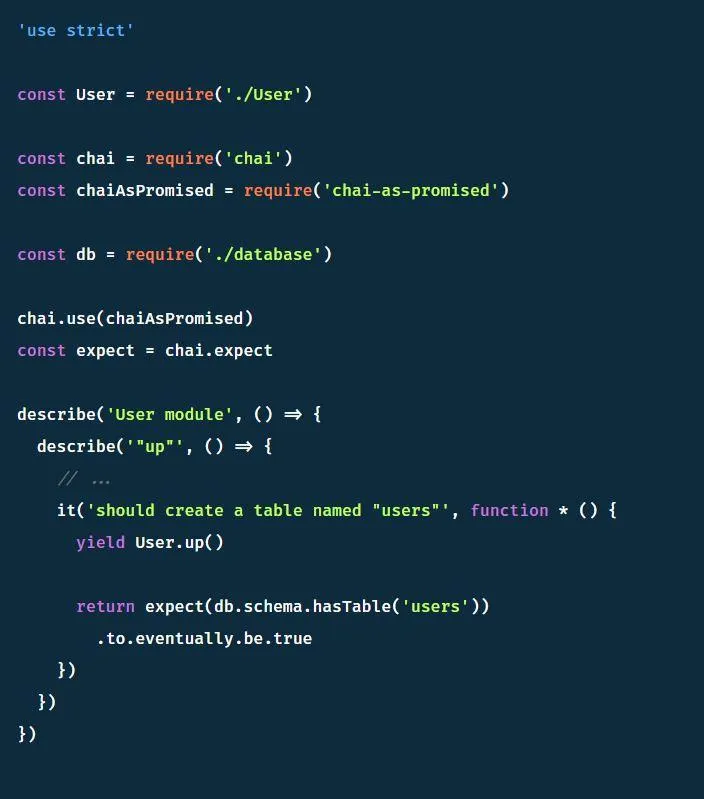

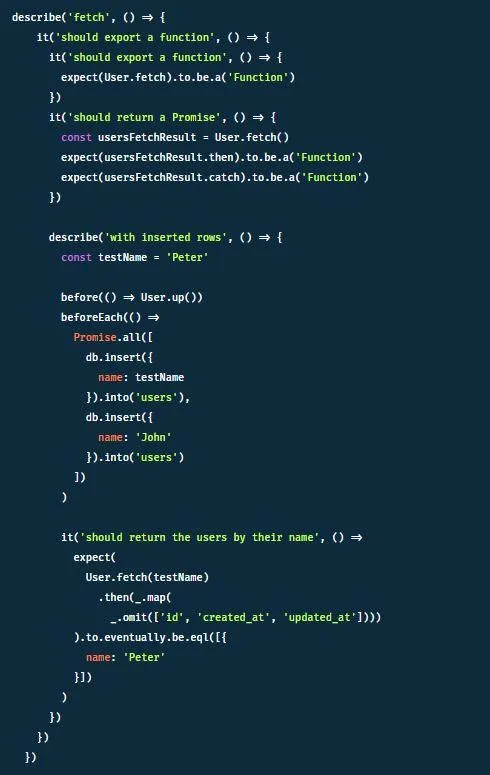

Creation of new modules- The module we create will handle creating and fetching users from a PostgreSQL database. We will use Knex for this, but first, we must create a new module.

Now we need to install all the necessary testing tools.

Add the lines you see below to package.json-

Creating the first test file-

It is time to create the first test file now. The code below makes this clearer.

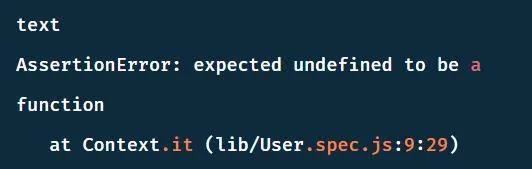

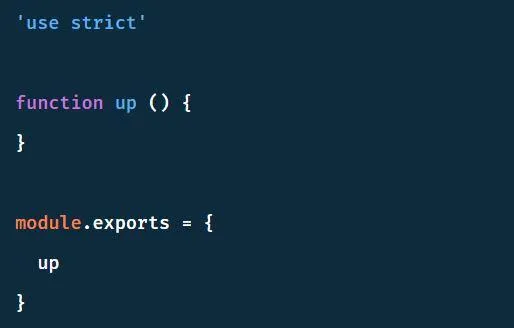

Creating a function named ‘up’ encapsulates the creation of the table. Run the tests with the commands below-

Here’s how to fix the first of the failing tests-

For the present requirements, the above code is sufficient. There is hardly anything to refactor, so let us write the next test: the up function should run asynchronously.

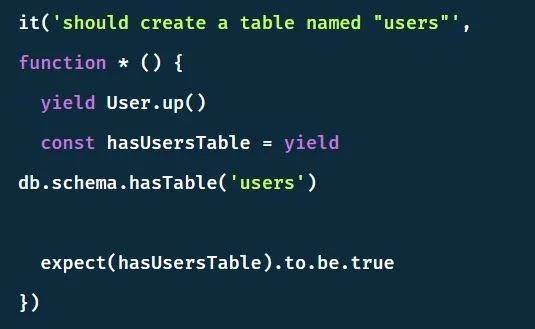

Node.js test case creation

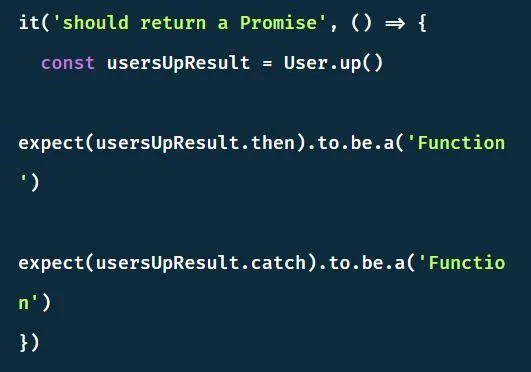

The up function must return a promise, and the test below checks that-

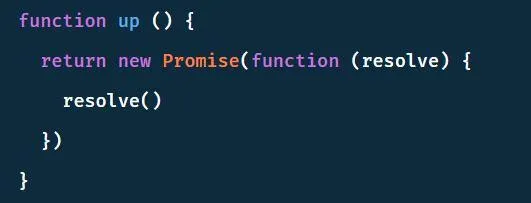

The test fails again; returning a Promise from the function fixes that!

Keep in mind that it is always best to take small steps: write a test, then write the code that satisfies it. This is good for documenting the code, and it pays off if the API changes in the future, because the tests make clear what went wrong. For example, if someone changes the up function to use callbacks instead of promises, the test will fail.

If you need support with test-driven development, consider a Node.js development company with adept developers who can help you build solutions that meet your expectations.

Advanced testing in Node.js

Initial steps

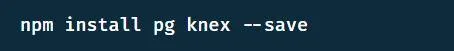

Now it is time to create tables with the help of knex so ensure you have it installed!

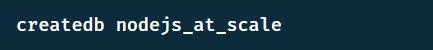

You have to use the command inside the terminal to create a database named nodejs_at_scale.

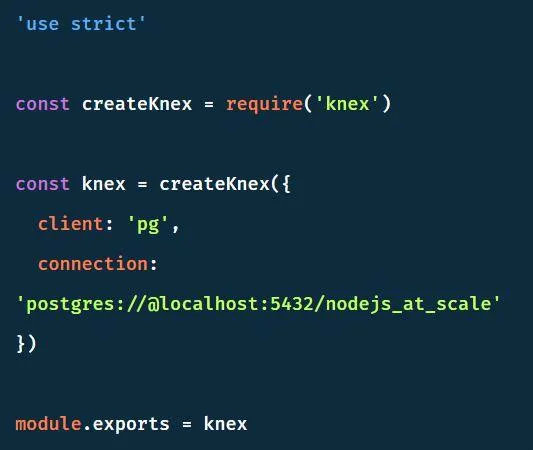

Now a database.js file must be created to ensure connection to the database in one place.

The actual implementations

It is now time to focus on the refactoring stage. Because handling promises by hand can become a bit cumbersome, we will use the co-mocha module, which builds on co and adds some syntactic sugar to the tests.

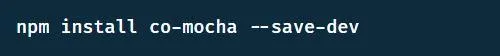

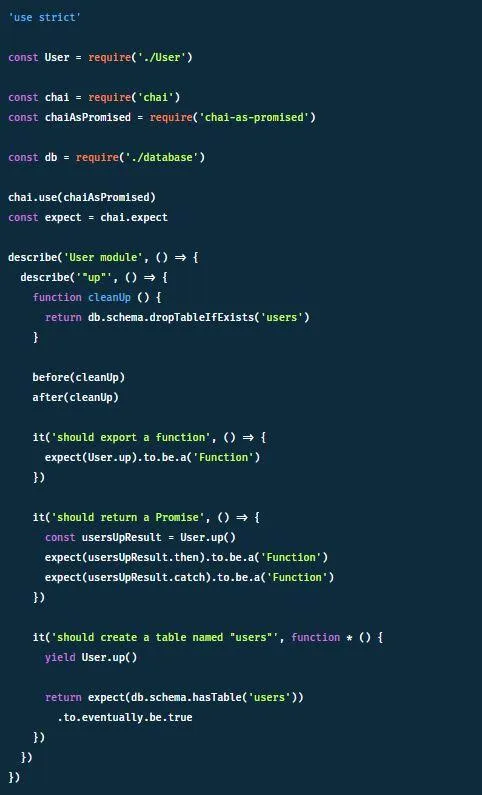

Now let’s add the new module to the existing test script.

With everything in place, we can begin refactoring.

co-mocha lets developers write it blocks as generator functions and use the yield keyword to suspend at Promises. Adding the chai-as-promised module makes the tests less cluttered still.

chai-as-promised extends the standard chai assertions with expectations about promises, and db.schema.hasTable('users') returns a promise. Refactor the test to what you see below.

In this example, the yield keyword extracts the resolved value from the promise. Alternatively, you can return the promise at the end of the function and let Mocha wait for it. These patterns can be used throughout the codebase to make the tests cleaner.
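To see what yield is doing here, it helps to look at a tiny generator runner. The run function below is written only to illustrate the idea behind co; it is not the co or co-mocha module, and hasTable is a hypothetical stand-in for db.schema.hasTable.

```javascript
// Minimal generator runner for illustration; it handles yielded promises
// only and skips the other features of the real co module.
function run(generatorFn) {
  return new Promise((resolve, reject) => {
    const iterator = generatorFn();

    function step(method, value) {
      let next;
      try {
        next = iterator[method](value); // resume the generator
      } catch (err) {
        return reject(err);
      }
      if (next.done) {
        return resolve(next.value);
      }
      // Wait for the yielded promise, then feed its outcome back in.
      Promise.resolve(next.value).then(
        (resolved) => step('next', resolved),
        (error) => step('throw', error)
      );
    }

    step('next');
  });
}

// Hypothetical stand-in for db.schema.hasTable('users').
const hasTable = (name) => Promise.resolve(name === 'users');

const outcome = run(function* () {
  const exists = yield hasTable('users'); // exists is the resolved boolean
  return exists;
});

outcome.then((exists) => console.log('users table exists:', exists));
```

The generator body reads like synchronous code, which is the syntactic sugar the refactoring relies on.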

Now we have to clean up before and after the tests, using before and after blocks.

That is enough for the ‘up’ function, so we can carry on and create the fetch function for the user model.

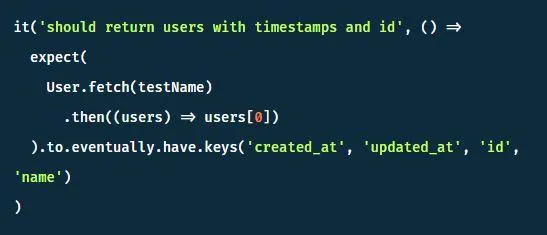

With the exports and the returned types dealt with, you can begin the actual implementation. Since we are testing modules that use the database, create an additional describe block for the functions that need test data inserted. Within this block, create a beforeEach block to insert data before every test.

It also helps to add a before block that creates the table before testing.

Lodash is used here to omit the fields that the database adds dynamically, which would otherwise be difficult to inspect. You can also use Promises to extract the first value and inspect its keys. The code below shows how.

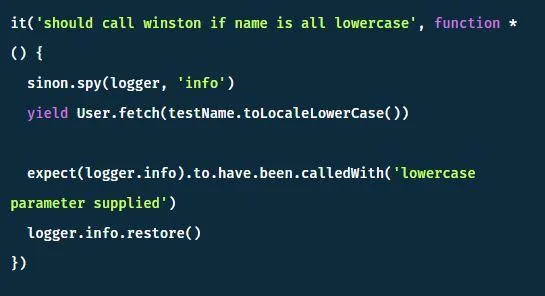

Testing internal functions

This step involves testing the internals of the functions. When you write a test, only the functionality of the function under test should be exercised, which means ignoring its calls to external functions. The utility functions supplied by the sinon module help with this.

The module provides three things-

Stubbing: The stubbed function is not called; you supply an implementation in its place. If you do not supply one, an empty function () {} is called instead.

Spying: A spied function is still called with its original implementation, but you can make assertions about the calls.

Mocking: This works like stubbing, but for whole objects rather than individual functions.

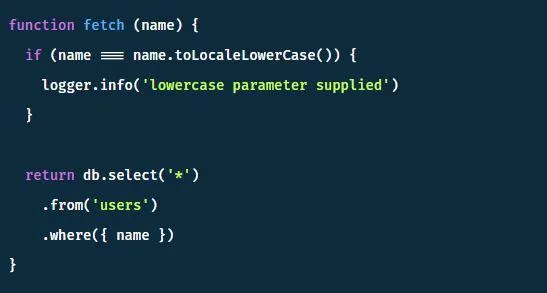

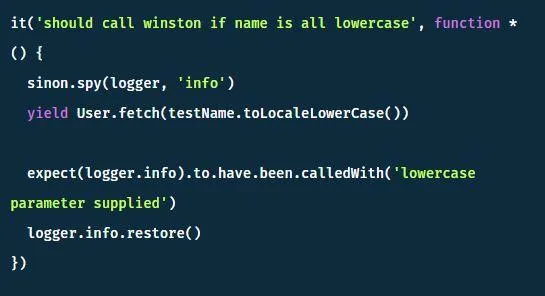

Let’s introduce a logger module, winston, into the codebase to see how spies are used.

Use the code below to make the test pass-

Now check the output, since the tests have passed!

The logger was called, which the tests verified, and its message also showed up in the test output. It is best to keep the test output free of such clutter, and you can do that by replacing the spy with a stub. Keep in mind that a stub does not call the function it is applied to.

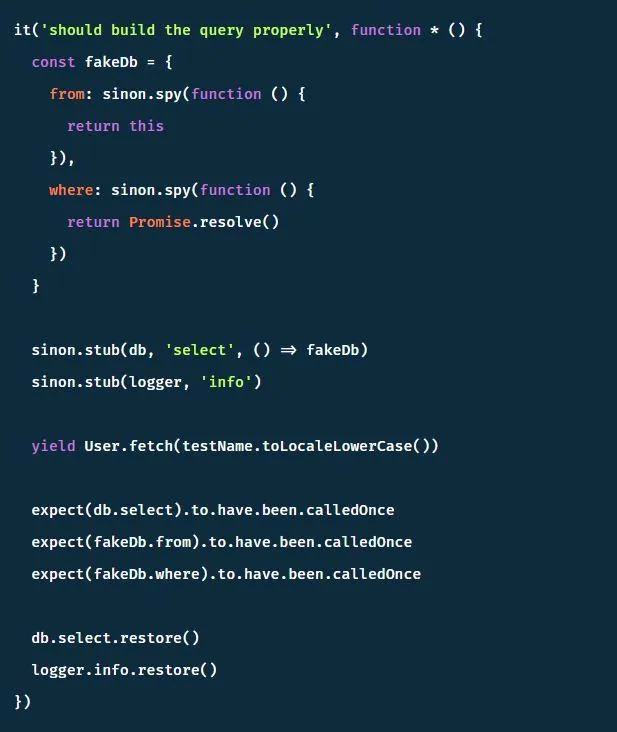

If you do not want the functions to call the database, the same approach applies. You can stub the functions on the db object one by one, as shown below-

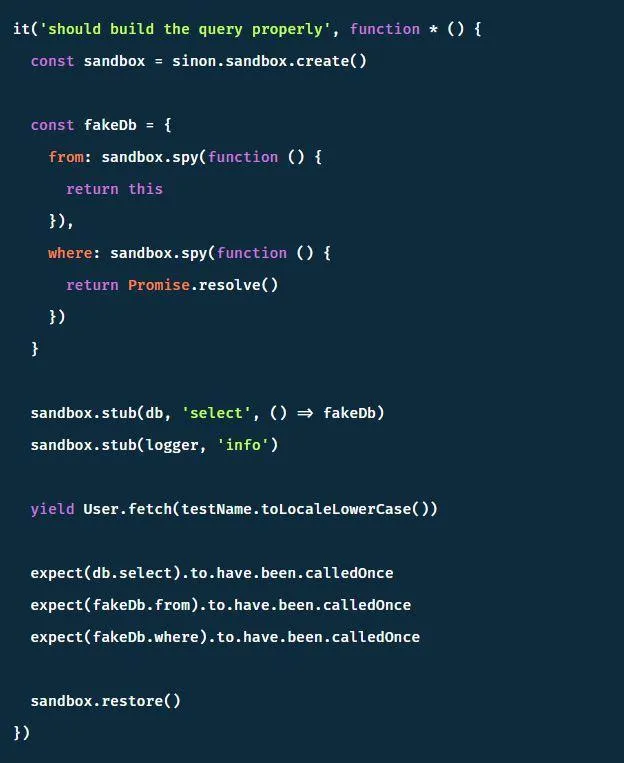

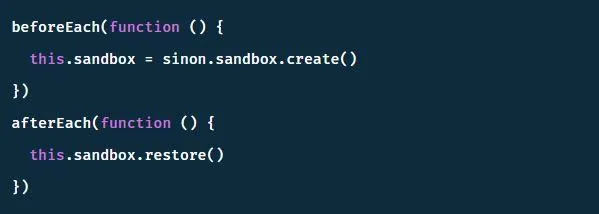

Restoring every stub one by one is tedious, and sandboxing is an easy fix. Sinon provides the sandbox: you create it at the start of a test and, once you are done, restore all the stubs and spies on the sandbox at once. The code below shows how.

You can also move the sandbox creation into a beforeEach block.

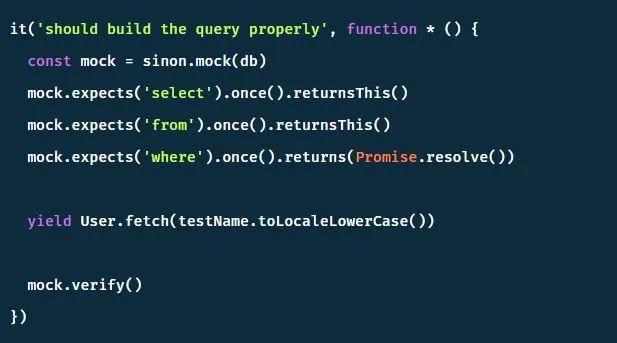

The last refactoring step for these tests is to use a mock instead of stubbing every single property on the fake object. This makes the intentions clearer and the code more compact. To imitate the chained function calls in the tests, use the returnsThis method.

Preparing for failures

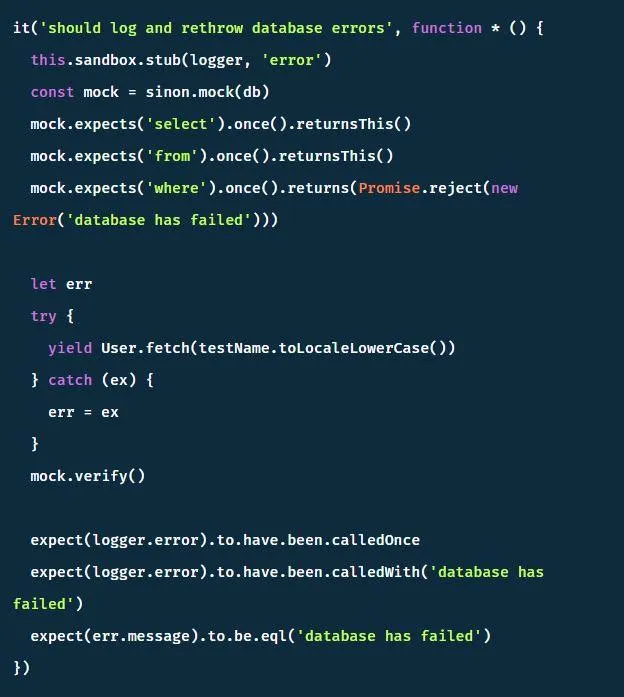

It is always best to be prepared for failures, as things may not work out as planned. In our case the database may crash, and knex will then throw an error. The code below shows how to mimic this behavior: one of the functions is stubbed and expected to throw.

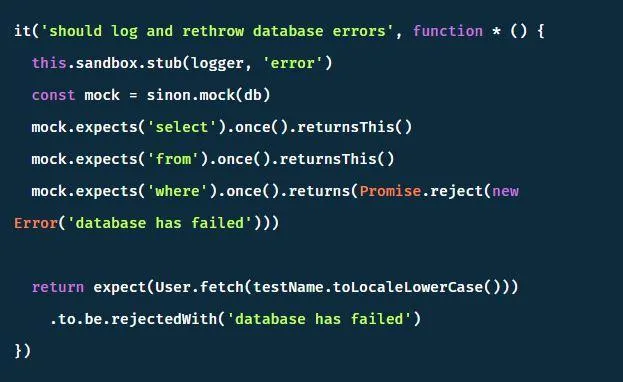

Every error that can appear in the application can be tested this way. If you would rather avoid the try-catch block, you can rewrite the test with a more functional approach, as below-

Mocha for TDD in building Node/Express API

Prerequisites

You should know how to build APIs in Express. Apart from this, you must have the following installed on your system before getting started-

1. Node/NPM

2. MongoDB installed and running

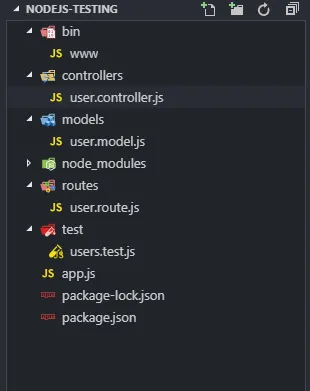

The Project Structure

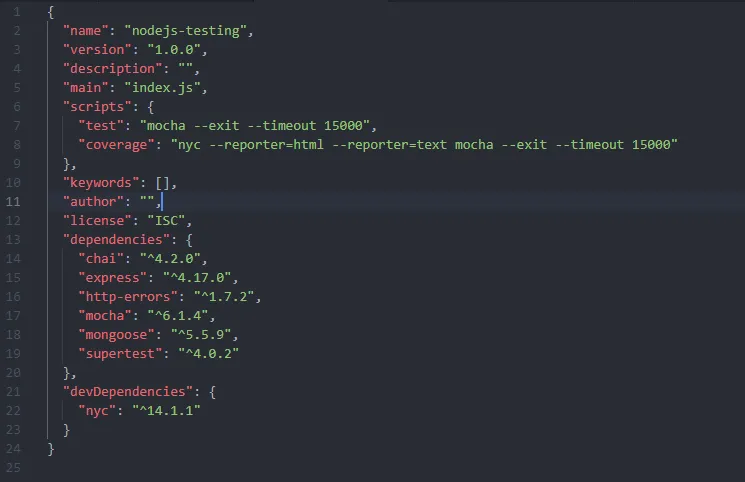

We will create a REST API that handles CRUD operations on users. Create a new folder named nodejs-testing, open it, and run the following-

npm init --yes

npm i express mocha chai supertest nyc mongoose

These commands initialize a new npm project with default parameters and add the essential libraries to it.

1. Mocha/Chai to write tests

2. Supertest to mock requests

3. Nyc for reports on test coverage

4. Mongoose for creation of MongoDB interactions and models

The project structure will look something like what you see below. Let’s start by generating a bare-minimum Express application.

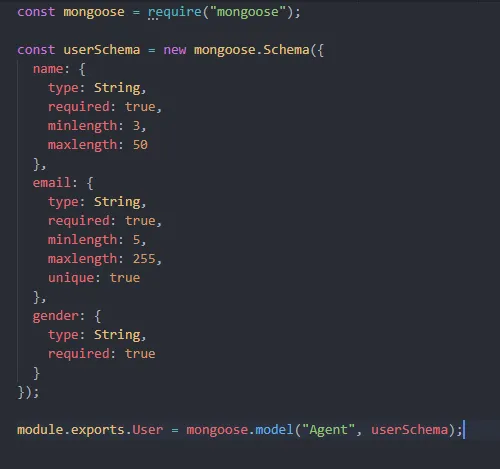

The user.model.js

Define a new schema for the user. Here we will use only three properties for the user.

App.js

Here we write the application logic in app.js and connect to the MongoDB database. We use the usersRouter (which is empty at the moment, and that is fine) and return errors for endpoints that do not exist. We then export the app so the tests can use it without it listening on any port.

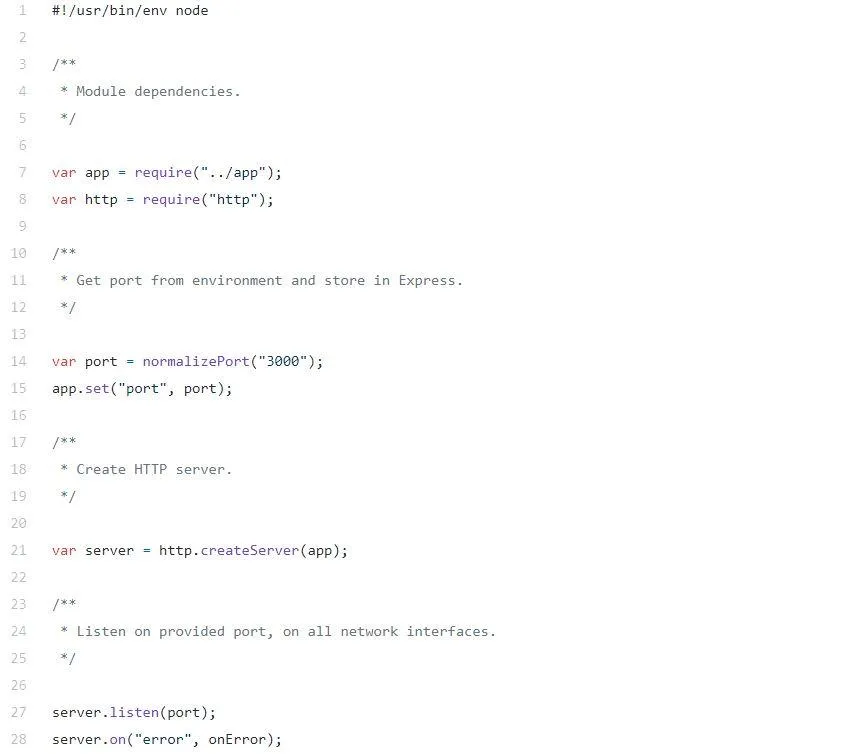

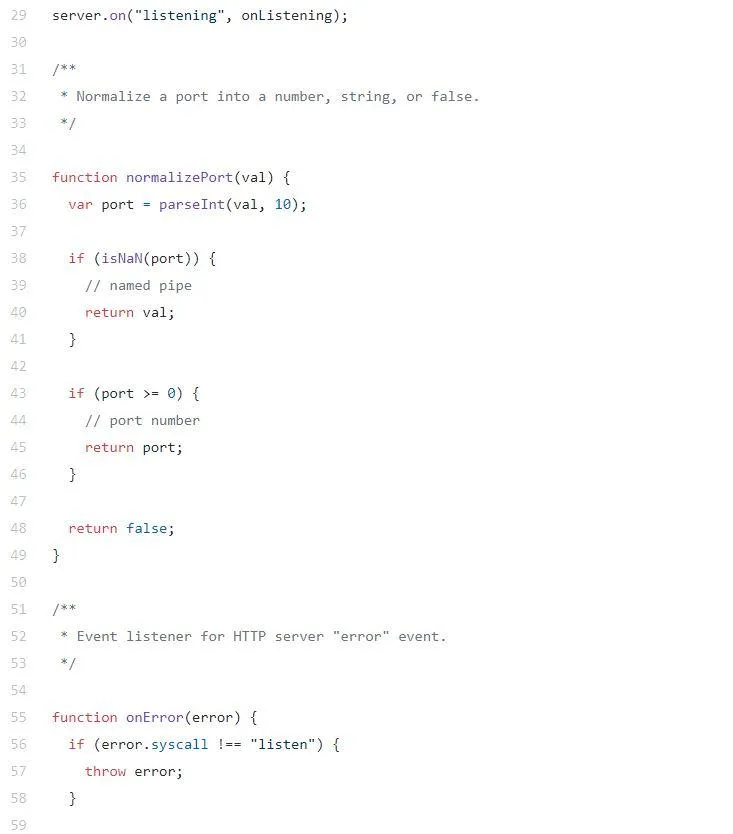

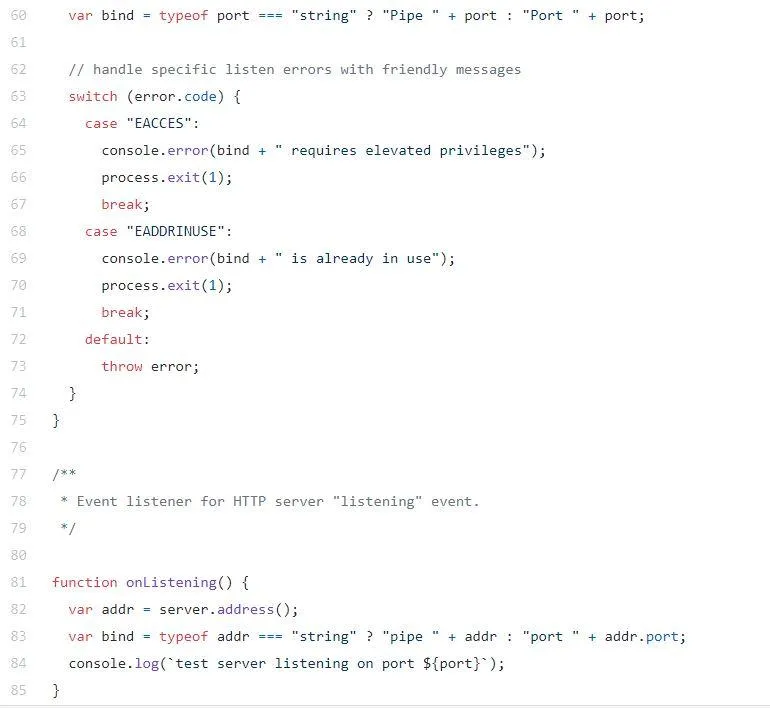

The real startup happens in ./bin/www.

There we create an HTTP server that listens for incoming requests in development or production mode. With this basic skeleton in place, we can run the app.

node bin/www

test server listening on port 3000

Connected to MongoDB at mongodb://localhost/tddDB…

The server is listening on port 3000, and when you request a resource from localhost:3000 you get the response {"message": "Not Found"}.

Writing Tests

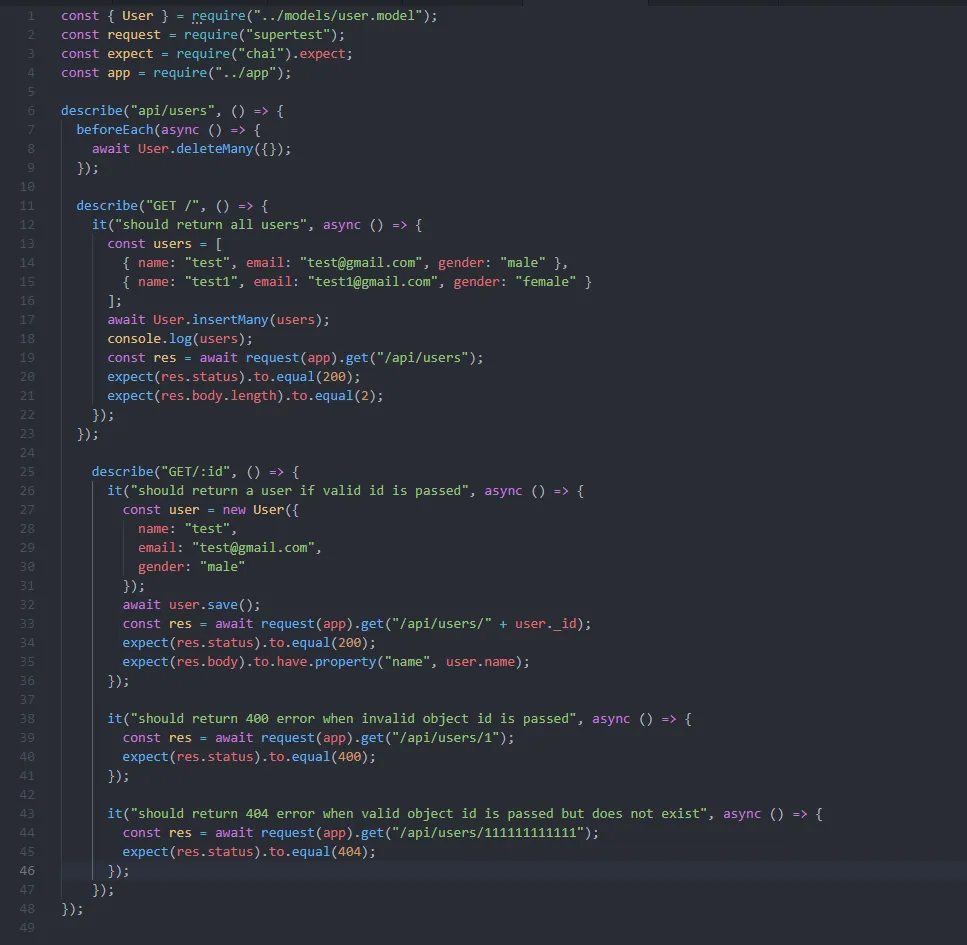

The user.controller and user.route files were left empty to demonstrate how the TDD approach drives the application logic. So, let’s write their tests, run them, and watch them fail. Then add logic to make a test pass, and repeat the process until all the tests pass.

Before writing the tests, make sure they have correct setups and teardowns. These matter because they let every test start fresh, which makes the tests more reproducible and predictable.

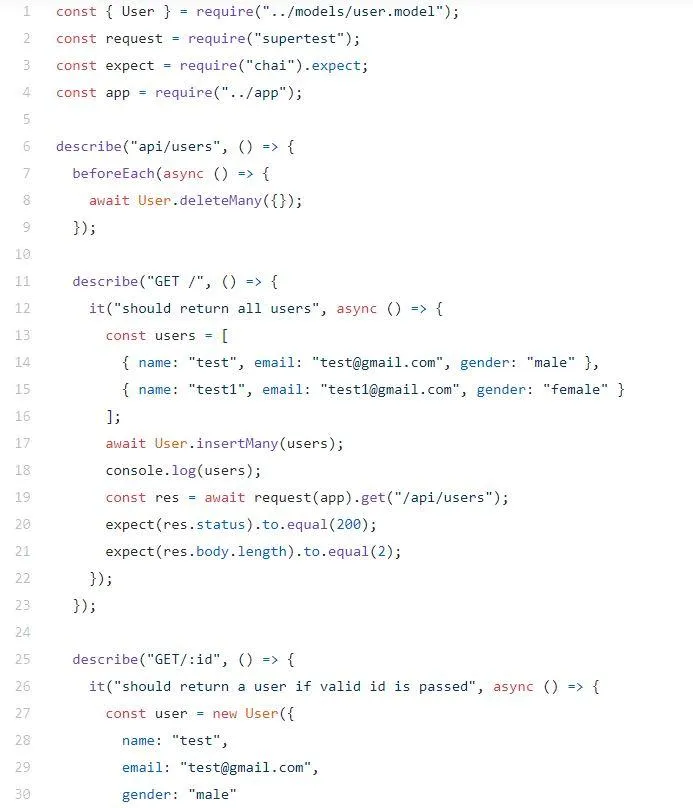

The integration test here tests the api/users endpoint. A describe block groups the tests and adds structure to them, and it blocks hold the individual tests.

The primary describe("api/users") group comes first, and in it we clear out the users collection in MongoDB before each test. In the describe("GET /") block, we insert users into the database and assert on the results.

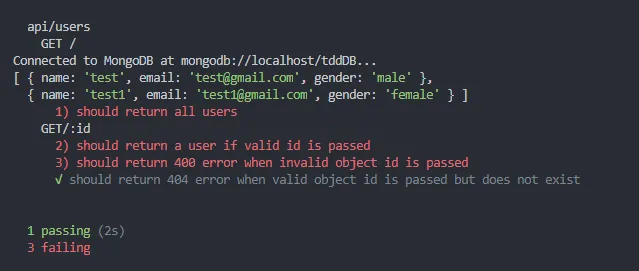

Running Tests

Running the tests is straightforward; use the following command-

mocha --timeout 10000 --exit

The default timeout is increased to 10 seconds so that the database operations have adequate time to execute.

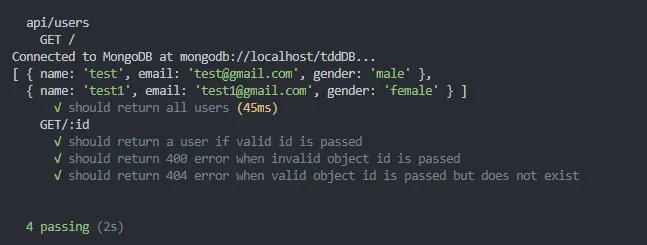

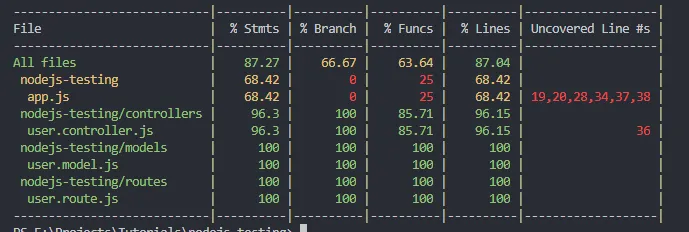

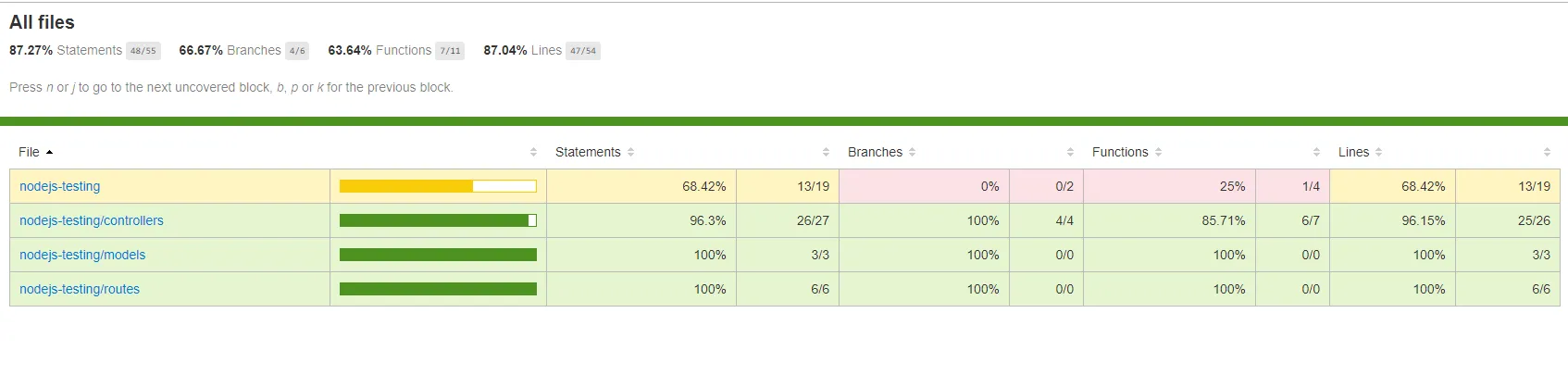

You will get output similar to the one above. The describe and it descriptions are visible, and the structure shows where the tests pass and fail.

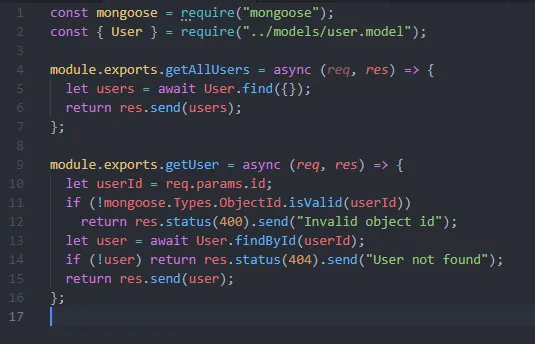

Adding logic to Pass the test

The tests fail as predicted, and it is time to add logic to make them pass.

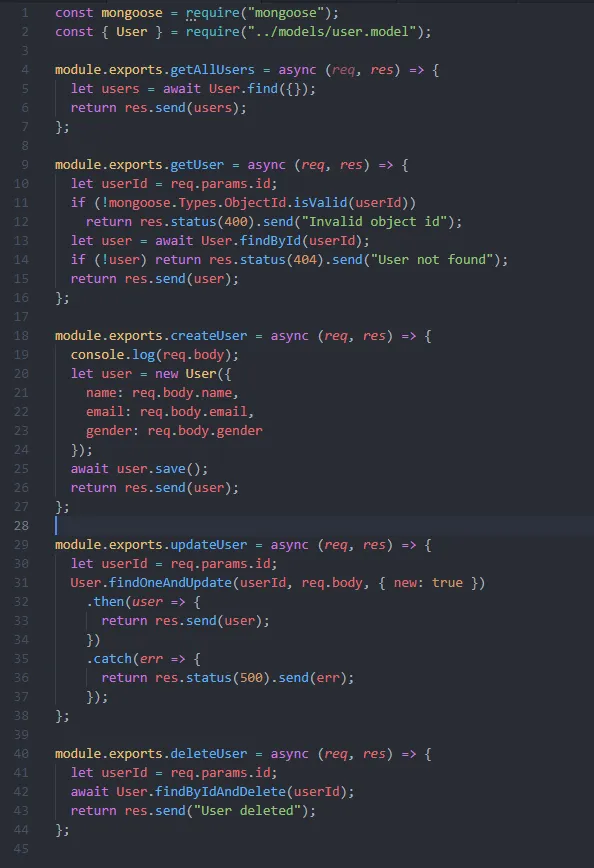

User.controller.js

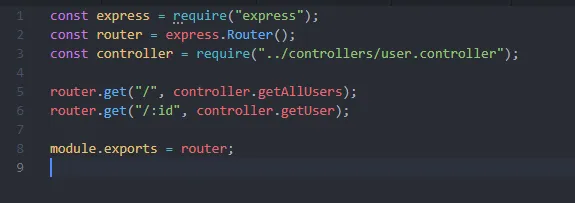

User.route.js

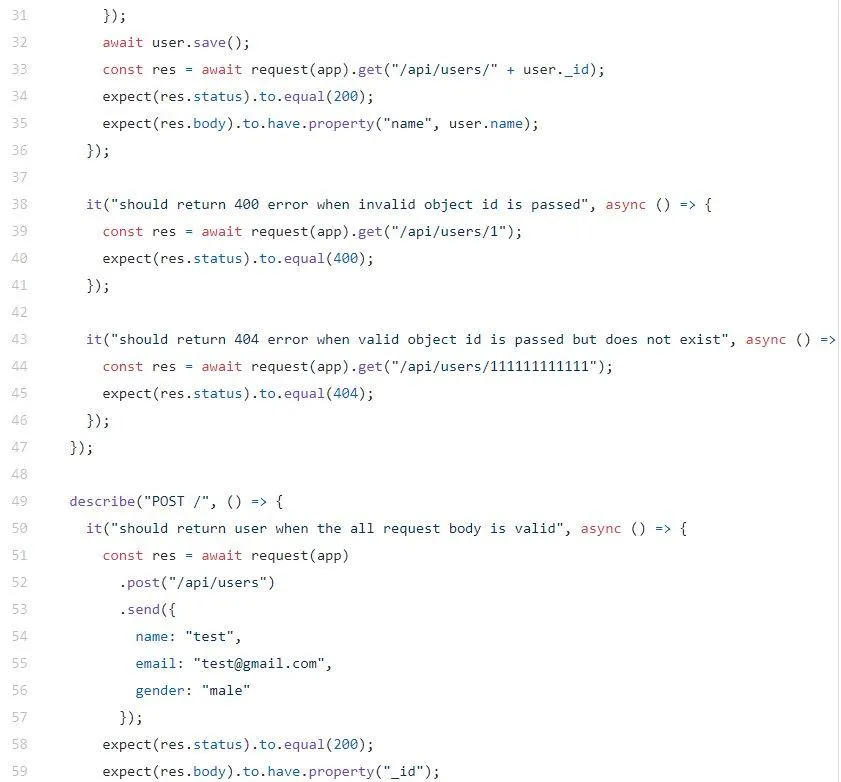

Now add code to the routes and controllers, similar to what you see above. The controller gets two methods: one for all users and one for a specific user. Check whether the object id passed in is valid so that the appropriate response is sent, matching the behavior the tests define.
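The id check can be reasoned about separately from the database. The sketch below is a hypothetical helper, not the controller from the article: it treats an id as well formed when it is a 24-character hexadecimal string, and the 400 and 404 status codes are assumptions about what the tests might expect.

```javascript
// Hypothetical helper: a MongoDB ObjectId is commonly written as a
// 24-character hexadecimal string, so anything else can be rejected early.
function looksLikeObjectId(value) {
  return typeof value === 'string' && /^[0-9a-fA-F]{24}$/.test(value);
}

// Decide the HTTP status for GET /api/users/:id from the id and the
// result of the lookup (assumed codes: 400, 404, 200).
function statusForLookup(id, user) {
  if (!looksLikeObjectId(id)) return 400; // malformed id
  if (!user) return 404;                  // well-formed id, no such user
  return 200;
}

console.log(statusForLookup('1', null));                        // 400
console.log(statusForLookup('5f1d7f3e2c9a4b0012345678', null)); // 404
console.log(statusForLookup('5f1d7f3e2c9a4b0012345678', {}));   // 200
```

Keeping the decision in a small pure function makes it easy to unit test before the route and the database are wired together.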

Running the tests now gives output similar to what you see below-

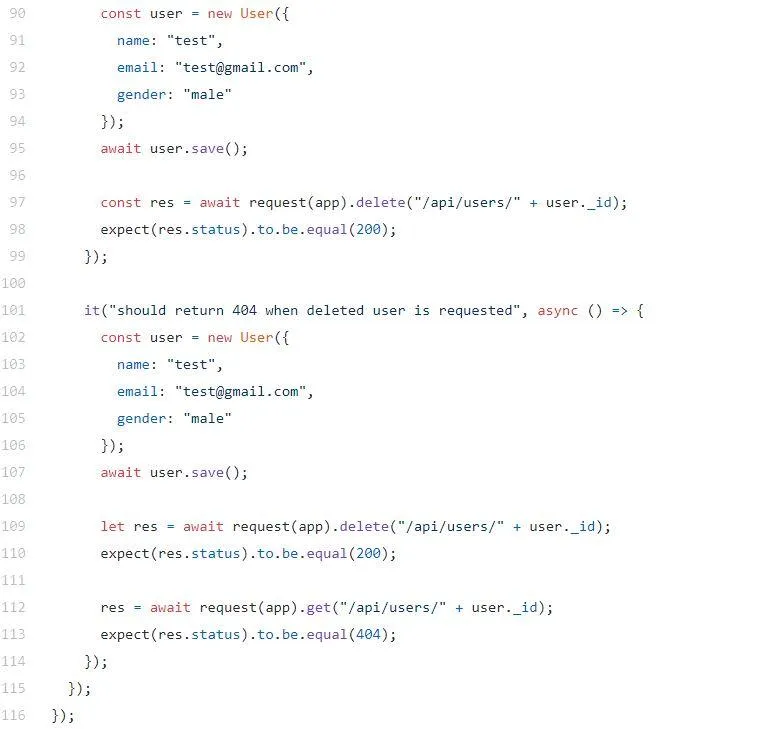

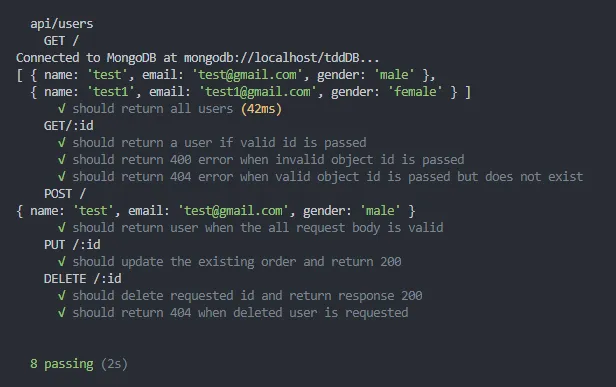

Now add tests for handling POST, PUT and DELETE, along with the simple logic needed to make them pass; see below-

These tests make sure the CRUD operations work, but they are not a complete set. We should write additional tests to make sure the code is stable.

Let’s rerun the tests.

Code coverage

We can easily find out how well the tests cover the code; for this we use the nyc module installed earlier.

It generates a text report and an HTML report, giving us a visual overview of the code coverage. So, run npm run coverage.
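The coverage script itself is not shown in the text. One plausible shape for the scripts section of package.json is sketched below; the choice of reporters and the mocha flags are assumptions based on the text and HTML reports and the mocha command mentioned earlier.

```json
"scripts": {
  "test": "mocha --timeout 10000 --exit",
  "coverage": "nyc --reporter=text --reporter=html mocha --timeout 10000 --exit"
}
```

With an entry like this, nyc wraps the mocha run and writes its reports once the tests finish.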

Since the HTML report was specified, you now have a coverage folder.

Checking the result shows an 87% code coverage, which is pretty decent!

Conclusion

Test-driven development with Node.js helps achieve higher code quality as well as broader code coverage. For an application to run smoothly and meet your expectations, these factors are top priorities. TDD also cuts down on tedious manual testing, which helps explain why the demand for hiring Node.js developers is increasing day by day.

The techniques are easy to understand and bring a variety of benefits that make a developer’s life easier, so give TDD a try!